The science of sharing dreams on social media

The idea of sharing our dreams on social media may sound like something out of a science fiction film. Imagine waking up in the morning and uploading a short video of your dream, much like posting a photograph or a story online. Surprisingly, some scientists believe this may not be entirely impossible in the distant future. The renowned theoretical physicist and futurist Michio Kaku has often suggested that advances in neuroscience and artificial intelligence could eventually make it possible to record and visualise human dreams. In such a future, dreams could be converted into digital media—reviewed, stored, and even shared.

Rapid Eye Movement and the Language of the Brain

To understand how such a technology might work, one must begin with the science of dreaming. Most vivid dreams occur during a stage of sleep known as Rapid Eye Movement (REM) sleep. During this phase, the brain becomes highly active, with activity patterns closely resembling those seen during wakefulness. Several regions, particularly the visual cortex and memory centres, generate complex electrical signals corresponding to images, emotions, and experiences.

When a person dreams of walking through a forest, meeting someone familiar, or flying through the sky, the brain produces neural patterns similar to those generated during real-life perception. If these signals can be measured and interpreted accurately, it becomes theoretically possible to reconstruct the visual content of dreams.

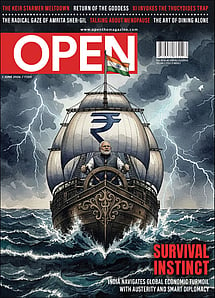

Survival Instinct

22 May 2026 - Vol 04 | Issue 72

India navigates global economic turmoil with austerity and smart diplomacy

Modern neuroscience has begun to explore this possibility. Brain-imaging technologies such as electroencephalography (EEG) and functional magnetic resonance imaging (fMRI) allow researchers to observe neural activity in remarkable detail. While these tools do not capture images directly from the mind, they provide rich datasets that reveal how the brain behaves when we see, imagine, or remember.

Machine Learning and Dream Reconstruction

However, recording brain activity is only the first step. The greater challenge lies in interpreting these signals. This is where artificial intelligence plays a transformative role.

Researchers train machine learning models by exposing participants to thousands of images—landscapes, objects, animals, and everyday scenes—while recording their brain activity. Over time, these systems learn to associate specific neural patterns with particular visual elements. Once trained, the models can analyse new brain signals and attempt to reconstruct what a person is seeing or imagining.
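The training-and-decoding loop described above can be illustrated with a toy sketch. Everything in it is an assumption made for illustration: the "brain activity" is synthetic random data rather than real EEG or fMRI recordings, the three categories and the function names are hypothetical, and the nearest-centroid decoder is a deliberately simple stand-in for the far more sophisticated models used in actual studies.

```python
import random


def simulate_trial(prototype, noise, rng):
    """Return one synthetic 'activity' vector: a category prototype plus Gaussian noise."""
    return [value + rng.gauss(0.0, noise) for value in prototype]


def train_centroids(trials):
    """Average the training vectors of each label to get one centroid per category."""
    sums, counts = {}, {}
    for label, vector in trials:
        if label not in sums:
            sums[label] = [0.0] * len(vector)
            counts[label] = 0
        sums[label] = [s + v for s, v in zip(sums[label], vector)]
        counts[label] += 1
    return {label: [s / counts[label] for s in total] for label, total in sums.items()}


def decode(vector, centroids):
    """Return the label whose centroid is closest in squared Euclidean distance."""
    def distance(centroid):
        return sum((a - b) ** 2 for a, b in zip(vector, centroid))
    return min(centroids, key=lambda label: distance(centroids[label]))


def run_demo(seed=0, n_features=20, n_train=50, n_test=50, noise=1.0):
    """Train on synthetic viewing trials, then decode unseen trials; return accuracy."""
    rng = random.Random(seed)
    categories = ["person", "vehicle", "building"]  # hypothetical image categories
    # Assumption: each category evokes its own characteristic activity pattern.
    prototypes = {c: [rng.gauss(0.0, 1.0) for _ in range(n_features)] for c in categories}
    train = [(c, simulate_trial(prototypes[c], noise, rng))
             for c in categories for _ in range(n_train)]
    centroids = train_centroids(train)
    test = [(c, simulate_trial(prototypes[c], noise, rng))
            for c in categories for _ in range(n_test)]
    correct = sum(decode(vector, centroids) == label for label, vector in test)
    return correct / len(test)


if __name__ == "__main__":
    print(f"Decoding accuracy on synthetic data: {run_demo():.2f}")
```

The sketch only recovers a broad category label from a noisy pattern. Reconstructing an actual image from neural data is a much harder problem, which is one reason real reconstructions remain rough.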

This approach has already been explored in pioneering studies at institutions such as Kyoto University and the University of California, Berkeley. In several experiments, researchers successfully generated rough visual reconstructions from brain activity recorded while participants viewed images or videos.

Although these reconstructions remain blurry and incomplete, they demonstrate a crucial principle: elements of human visual experience can be decoded from neural signals. In some studies, scientists have also attempted to analyse dream content by comparing brain activity recorded during sleep with the descriptions participants gave after waking, refining their models over repeated trials. These findings suggest that broad categories of dream imagery, such as people, vehicles, or buildings, can sometimes be identified.

From Neural Signals to Digital Dreams

If these techniques continue to evolve, future systems may be capable of producing far more detailed reconstructions. Advanced AI models could transform neural data into images, animations, or even short video sequences representing dream experiences. Once digitised, such content could theoretically be stored, edited, or shared—much like today’s photos and videos.

The development of brain–computer interfaces (BCIs) further strengthens this possibility. BCIs create a direct communication pathway between the brain and external devices, allowing neural signals to be captured with increasing precision. As this technology matures, it may enable more accurate interpretation of thoughts and visual experiences.

Technology companies are already investing in this frontier. One notable example is Neuralink, which is developing advanced brain–machine interfaces. While its current focus is on medical applications, such innovations could eventually contribute to dream-recording systems.

Ethical Questions and Future Possibilities

Despite its promise, dream-recording technology raises profound ethical concerns. Dreams often contain deeply personal memories, emotions, and subconscious thoughts. If such data could be recorded or accessed, issues of privacy, consent, and mental security would become critical.

Robust ethical frameworks would be essential to ensure that brain data remains protected and is used responsibly. Without such safeguards, the risks could outweigh the benefits.

Nevertheless, the concept of visualising dreams highlights the extraordinary pace of scientific progress. Advances in neuroscience, artificial intelligence, and computational power are steadily uncovering the complexities of the human brain. What once seemed impossible—interpreting thoughts or reconstructing mental imagery—is now an area of serious research.

The Future of Dreaming

Dreams have fascinated humanity for centuries, inspiring philosophers, poets, and scientists alike. Today, research into sleep and brain activity is transforming these mysterious experiences into measurable phenomena.

While the ability to record and share dreams remains distant, current progress suggests that the boundary between mind and machine may continue to blur. If future breakthroughs occur, the dream world may no longer remain entirely private or invisible. One day, people might wake up, watch a recording of their dreams, and share them with others—turning the most intimate inner experiences into a new form of digital storytelling.

What once existed only within the mind may eventually become a new kind of social media expression.

(This article is inspired by ideas from the book Physics of the Future by Michio Kaku.)